TECHNICAL SEO

What is Technical SEO?

Technical SEO is the process of improving and optimizing your core technology stack and underlying website infrastructure to perform better for search engines. It focuses primarily on backend processes like indexing, crawlability, accessibility, performance, architecture, security, structured data, and AMP.

Why is Technical SEO important?

The easy answer would be that it is technical, but that is only half of the answer. Search engines like Google, Bing, and Yandex are networks that use crawler bots to collect pages and keep them ready in an index, much like the index of a book. One of the first ideas taught in computer science is that computers make data useful by turning it into information. An index does exactly that: it makes data easier to turn into information and faster to distribute, which cuts the time needed to bring the best, most recent, and most relevant results to a search query.

That speed serves user experience. Google is deeply committed to user experience, which is one of the reasons it is the biggest network, and it both practices and preaches techniques that reduce latency through performance improvements. When more than 70% of global traffic moves through your system, simply collecting, curating, and indexing content, along with the infrastructure to measure and calibrate it, is a 30 billion affair, and the cost is climbing with AI snippets. Technical SEO is the saving grace for search engines and for us.

This brings us to crawl budget: the value search engines assign to a site in order to run the process above effectively. Think of it as the unit economics of a search engine. For smaller sites this budget can run out faster, because the value a small site brings to the table varies. Search engines do not necessarily understand context. They rely heavily on matching search terms to the most relevant, authoritative content, and they calibrate that match against basic user experience markers such as Click Through Rate, Dwell Time, and Bounce Rate. That is why overall SEO matters: On-Page, Off-Page, and Technical. Here we will dwell on the elements that tie those markers to crawl ROI. Site architecture and performance are rewarded heavily by search engines, which promote well-built sites with better visibility and exposure.

Technical SEO is one of the most undervalued parts of SEO because we seldom look under the hood, and when we do, it can get complex. Getting familiar with best practices, techniques, and strategies comes in handy for staying ahead of the curve.

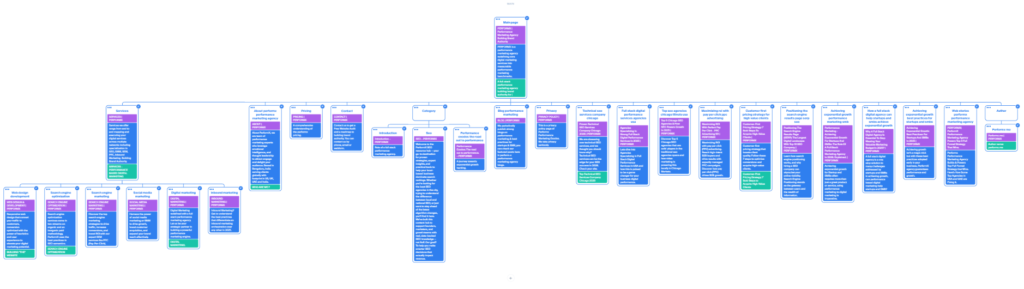

9 PILLARS OF TECHNICAL SEO

A complete visual framework of info cards to help optimize crawlability, indexing, performance, and search visibility

1 CRAWLABILITY

Robots.txt → controls crawler access → keeps crawlers away from irrelevant pages

Broken internal links → fix them so all pages are reachable → preserves link equity

Redirect chains/loops → maintains smooth crawling → prevents link dilution & errors

HTTP status codes → 200/404/500 monitoring → ensures successful page access

XML Sitemap → guides crawlers → speeds up indexing of important pages
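As a rough illustration of the robots.txt card, the sketch below uses Python's standard `urllib.robotparser` to check which URLs a crawler may fetch. The robots.txt content and the example.com URLs are hypothetical, and real crawlers may interpret rules slightly differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an example site (an assumption, not a recommendation).
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Allow: /
"""


def crawl_report(robots_txt, urls, user_agent="*"):
    """Return {url: True/False} showing whether user_agent may crawl each URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


if __name__ == "__main__":
    pages = [
        "https://www.example.com/blog/technical-seo",
        "https://www.example.com/cart/checkout",
        "https://www.example.com/search/results",
    ]
    for url, allowed in crawl_report(ROBOTS_TXT, pages).items():
        print("ALLOW" if allowed else "BLOCK", url)
```

Keep in mind that blocking a URL here only stops crawling. A blocked page can still end up indexed if other sites link to it, which is what the noindex tag is for.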

2 INDEXING

Canonical tags → avoids duplicate content → consolidates link equity

Noindex tags → prevents low-value pages from ranking → keeps SEO focused

Duplicate content → resolves ranking conflicts → improves SERP clarity

Orphan pages → ensure all pages are linked → maximizes crawl coverage

Sitemap coverage → verifies all pages included → guarantees visibility

3 SITE ARCHITECTURE

URL structure → clear & descriptive → improves crawlability & UX

Internal linking depth → reduces buried pages → distributes link authority

Breadcrumb navigation → shows hierarchy → helps Google understand structure

Silo structure → groups related content → strengthens topical relevance

Navigation hierarchy → accessible site → enhances UX & crawling

4 PAGE SPEED & CORE WEB VITALS

Largest Contentful Paint (LCP) → page load speed → reduces bounce rate

Interaction to Next Paint (INP, which replaced First Input Delay in March 2024) → interactivity → improves UX & engagement

Cumulative Layout Shift (CLS) → visual stability → prevents UX frustration

Time to First Byte (TTFB) → server response → enhances crawl efficiency

JS execution time → fast rendering → optimizes load & indexing
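To make the metrics above more concrete, here is a minimal sketch that rates a measurement as good, needs improvement, or poor. The thresholds are the ones Google publishes for each metric. The function name and the sample values are made up for illustration. INP is included because it replaced FID as a Core Web Vital in March 2024.

```python
# (upper bound for "good", upper bound for "needs improvement")
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "INP": (200, 500),    # milliseconds
    "FID": (100, 300),    # milliseconds; retired metric, kept for older reports
    "CLS": (0.1, 0.25),   # unitless layout-shift score
    "TTFB": (0.8, 1.8),   # seconds
}


def rate_metric(metric, value):
    """Classify one measurement against the published thresholds."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    # Hypothetical measurements for a single page.
    sample = {"LCP": 3.1, "INP": 180, "CLS": 0.32, "TTFB": 0.6}
    for metric, value in sample.items():
        print(f"{metric}: {value} -> {rate_metric(metric, value)}")
```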

5 MOBILE OPTIMIZATION

Responsive design → works on all devices → required for mobile-first indexing

Mobile viewport → proper scaling → ensures mobile usability

Mobile usability errors → button sizes & spacing → improves UX

Mobile page speed → fast mobile load → reduces bounce rate & ranking drops

6 STRUCTURED DATA

Schema markup → rich results → enhances CTR & visibility

Schema errors → fix invalid markup → maintains eligibility for SERP features

FAQ/Product/Event schema → contextual info → improves search experience

Rich result eligibility → more SERP real estate → higher clicks
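As one possible way to produce the FAQ schema mentioned above, the sketch below builds a schema.org `FAQPage` object and wraps it in a JSON-LD script tag. The helper names and the sample question are hypothetical. Valid markup makes a page eligible for rich results but does not guarantee them.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize the dict into a JSON-LD script tag for the page head."""
    # Escape "</" so answer text cannot close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    faq = faq_jsonld([
        ("What is Technical SEO?",
         "Optimizing website infrastructure so search engines can crawl and index it."),
    ])
    print(as_script_tag(faq))
```

Run the output through a structured data testing tool before publishing, since schema errors can remove eligibility for SERP features.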

7 SECURITY

HTTPS → secure browsing → ranking factor & user trust

SSL certificate → valid SSL → avoid browser warnings

Mixed content errors → fully secure site → maintains credibility

Security headers → protect site → prevent hacking & downtime

8 INTERNATIONAL SEO

Hreflang → correct language targeting → avoids duplication

Localized content → target country/language → improves SERP relevance

Regional domains → proper domain structure → avoids content cannibalization
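The hreflang card can be sketched as a small generator for the alternate link tags. The language codes and example.com URLs below are hypothetical. Each language version of a page is expected to list every alternate, including itself, so the same set of tags goes on all versions.

```python
from html import escape


def hreflang_links(alternates, x_default=None):
    """Return <link rel="alternate"> tags for a {hreflang code: URL} mapping."""
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in sorted(alternates.items())
    ]
    if x_default is not None:
        # Fallback for users whose language or region matches no listed version.
        links.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return links


if __name__ == "__main__":
    pages = {
        "en-us": "https://www.example.com/us/",
        "en-gb": "https://www.example.com/uk/",
        "de-de": "https://www.example.com/de/",
    }
    for tag in hreflang_links(pages, x_default="https://www.example.com/"):
        print(tag)
```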

9 VIDEO & MEDIA SEO

Video schema → rich snippets → improves visibility

Video sitemaps → ensures indexation → improves search coverage

Transcripts & captions → improves accessibility → enhances engagement

Technical SEO Best Practices

Keeping up with search engine dynamics can mean an exhausting list of over 150 markers, which we have condensed into a downloadable checklist. Alternatively, these are the best practices for being safe rather than sorry.

Site Architecture and Navigation:

Maintaining a topical hierarchy helps you go deeper with content structures and keeps link hygiene in check. It helps users navigate the site layout and find useful content faster, and it helps crawlers move through topics and subtopics. Keep a clear, clean representation of subjects and topics, and include the main pages to drive interest and learning.

Technology directly influences performance. Crawling can slow down larger sites, while smaller sites usually stay unaffected. Hosting, server location, caching mechanism, and image optimization all play a role.

Hosting Infrastructure:

Be careful when hosting on shared or virtual servers. Shared hosting often does not give direct access to the root directory and may limit your control over redirects and the robots.txt file that crawlers read. Without that control, multiple versions of the site can stay reachable, and Google tends to crawl all of them and pick one over the others. Also make sure your hosting runs in an isolated container so that your code does not interact with other tenants' code and databases are not mixed.

Sitemap:

Use both an XML sitemap and an HTML sitemap. The XML sitemap is for search engines to read, while the HTML sitemap is for humans to navigate and adds to the user experience. For larger sites this is imperative, and for smaller sites it still adds value. Some SEO plugins can submit the sitemap for indexing, but it is always best practice to also submit it in Google Search Console, just in case. It is also one of the few places where you can check whether the sitemap has been crawled.

NOTE: Google Search Console (formerly Webmaster Tools) requires a Google account to sign in. It can be your regular gmail.com address or a you@example.com address associated with your domain, as you will be asked to verify the domain property.
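For sites without a plugin that generates the XML sitemap, a minimal sketch using Python's standard library might look like the following. The URLs and dates are hypothetical, and the function name is an assumption. The namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, lastmod) pairs; lastmod may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            # W3C date format, for example 2026-01-15.
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        ("https://www.example.com/", "2026-01-15"),
        ("https://www.example.com/blog/technical-seo/", None),
    ]))
```

The resulting file would typically be saved as sitemap.xml in the site root and then submitted in Google Search Console.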

Redirection and Status Codes:

Redirects are huge crawl budget busters. Search engines spend budget on links that are not useful: links that lead to 404 pages, and redirects that hold space in the index, are wastage that could have been avoided. Maintaining link hygiene by redirecting old content pages to relevant new ones is another best practice. When an indexed page is served as a 404, the result is a bad user experience after all the hassle of getting the page crawled and indexed. The loss is not so much the budget as the experience of users and user agents. A 404 is not all bad, though: it tells search engines that the content is no longer available at that URL. For content that has moved, use a 301 redirect instead.
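One way to audit redirect hygiene is to walk a redirect map and flag chains and loops. The sketch below works offline on a dictionary of source path to target path. The paths and the function name are hypothetical, and a real audit would build the map from a crawl or from server configuration.

```python
def resolve_redirects(start, redirects):
    """Follow start through a redirect map; return (chain, status).

    status is "no redirect", "ok" (single hop), "chain" (two or more hops),
    or "loop" (the chain returns to a URL it has already visited).
    """
    chain = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        chain.append(current)
        if current in seen:
            return chain, "loop"
        seen.add(current)
    hops = len(chain) - 1
    if hops == 0:
        return chain, "no redirect"
    return chain, "ok" if hops == 1 else "chain"


if __name__ == "__main__":
    # Hypothetical redirect rules.
    rules = {
        "/old-page": "/interim-page",
        "/interim-page": "/new-page",
        "/a": "/b",
        "/b": "/a",
    }
    for path in ["/old-page", "/interim-page", "/a", "/new-page"]:
        chain, status = resolve_redirects(path, rules)
        print(status, " -> ".join(chain))
```

Chains are usually fixed by pointing every old URL straight at the final destination, so that each request needs a single hop.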

Build for Mobile First:

Mobile traffic accounts for over 56% of traffic, and Google's approach is now solidified with a full transition to mobile-first indexing: the mobile rendering of a page is the primary version used for indexing, and the desktop version is secondary. Content that exists only on the desktop version and is missing on mobile gets ignored for indexing and may even be left out of the crawl. This brings up another question: what if you do not have a mobile version? In that case, the desktop version will be used.

Core Web Vitals field data is derived from CrUX, the Chrome User Experience Report, once a site reaches the minimum threshold for the data set. Before that stage, PageSpeed Insights lab data continues to be the measurement guide.

Why am I not seeing any CWV reports?

Core Web Vitals reports require a minimum amount of data collected over a period of time. They show average performance and experience over 90 days, gathered from Chrome users who have consented to share usage data. A dataset on the order of 1,000 visits is a reasonable estimate of what it takes for a report to appear.

HTTPS and HSTS:

Security is a primary concern for trust. An HTTP version of the site will be flagged as not secure, raising doubts in the search experience, and is a big no. Using SSL across your property is a must, and serving a single HTTPS version to all users helps avoid cloaking signals that could even attract a penalty. The latest in browser security is HSTS, HTTP Strict Transport Security. This mechanism tells a browser to connect to a site only via a secure HTTPS route, thus mitigating man-in-the-middle, protocol downgrade, and cookie hijacking attacks.

HSTS is a policy that takes effect once you enable it on the server through the Strict-Transport-Security response header. The header instructs the browser to use only HTTPS for a stipulated timeframe, set by its max-age directive. The code that sets the header should run before WordPress loads, or the header should be set directly in the server configuration.

Why was the HSTS policy introduced?

Hypertext Transfer Protocol (HTTP) is the standard connection protocol, and the S stands for the security layer from SSL/TLS, indicating that the site is served over HTTPS. However, when a browser first connects to a site, it often starts with plain HTTP, and the server answers by redirecting it to HTTPS. That first unencrypted exchange is vulnerable to what is called a man-in-the-middle attack, which is why HSTS has become the norm. Because the policy is enforced only after the user's first visit, sites also enroll in the HSTS Preload List. When a domain is on this list, the browser skips the initial HTTP request and encrypts all communication immediately. This is a top-priority item for technical SEO.
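Before applying for the preload list, it can help to check the header a site actually sends. The sketch below parses a `Strict-Transport-Security` header value and tests it against the commonly published preload requirements: a max-age of at least one year (31536000 seconds) plus the includeSubDomains and preload directives. The function names are hypothetical, and the requirements should be confirmed against the preload list's current submission rules.

```python
ONE_YEAR_SECONDS = 31536000


def parse_hsts(header_value):
    """Split a Strict-Transport-Security value into {directive: value}."""
    directives = {}
    for part in header_value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, _, value = part.partition("=")
        # Directive names are case-insensitive; valueless ones map to "".
        directives[name.strip().lower()] = value.strip().strip('"')
    return directives


def preload_ready(header_value, min_age=ONE_YEAR_SECONDS):
    """Return True if the header meets the assumed preload requirements."""
    directives = parse_hsts(header_value)
    try:
        max_age = int(directives.get("max-age", ""))
    except ValueError:
        return False
    return (
        max_age >= min_age
        and "includesubdomains" in directives
        and "preload" in directives
    )


if __name__ == "__main__":
    for header in [
        "max-age=63072000; includeSubDomains; preload",
        "max-age=300",
    ]:
        print(preload_ready(header), header)
```

A short max-age such as 300 seconds is a sensible value while testing, since a long-lived policy is hard to roll back once browsers have cached it.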

Canonicals:

The HTTP version of a page should have a canonical that points to its HTTPS equivalent. This is a best practice for modern SEO and website security. Canonicals tell search engines which URL is the preferred version of a page. Many pages with similar content can point their canonical to one main page, which keeps search engines from treating them as duplicate content.
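A quick way to audit canonicals is to extract the tag from a page's HTML and confirm that there is exactly one and that it points to an HTTPS URL. The sketch below uses Python's standard `html.parser`. The sample markup and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def find_canonicals(html):
    """Return a list of canonical URLs declared in the HTML string."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


if __name__ == "__main__":
    page = """
    <html><head>
      <title>Red running shoes</title>
      <link rel="canonical" href="https://www.example.com/shoes/" />
    </head><body></body></html>
    """
    found = find_canonicals(page)
    print("canonicals:", found)
    print("single HTTPS canonical:",
          len(found) == 1 and found[0].startswith("https://"))
```

More than one canonical on a page, or a canonical pointing at an HTTP URL, is worth flagging for review.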

Technical SEO vs On-Page SEO vs Off-Page SEO

This is the most important part. Technical SEO is not separate; it supports and strengthens both on-page and off-page SEO. SEO works best when all three parts work together. Technical SEO ensures your content is seen and understood.

Backlinks are powerful, but only if your site is technically sound. Technical SEO ensures your authority is properly used and distributed.

Technical SEO affects BOTH of the others directly.

Controls visibility → without it, your content may never appear (On-Page SEO)

Controls performance → without it, your content may not rank (On-Page SEO)

Controls how authority flows → without it, your site may not compete (Off-Page SEO)

Best Order to Work on SEO

If you are building or improving a website, follow this order:

Step 1: Fix Technical SEO: Ensure crawling and indexing are done right, check that performance is acceptable, and fix errors.

Step 2: Improve On-Page SEO: Write better content by improving on previous thinking and including new learning and market best practices. Optimize keywords: identify performing keywords and low-hanging fruit, and improve site structure and navigation by aligning relevant content.

Step 3: Build Off-Page SEO: Get backlinks. Getting backlinks can mean earning, acquiring, receiving (voluntarily or involuntarily), or getting lucky. Promote your content and increase social signals. Issue press releases on product updates and collaboration efforts; basically, find a good enough business reason to stay in the news.

Why Technical SEO Services are Now More Important Than Ever

AI snippets are helpful information gathered from reliable and relevant sources. Your content can appear only if it covers that part of the subject and answers it in a structured manner, so that the answer is useful for the search query. Featured snippets are getting more comprehensive, and sources are featured within them in sets of 3 visible and 10 total. These include high-authority, relevant pages, video explanations of how-tos, and products that align with the query. None of this is etched in stone, and the combination appears to be curated for personalized and local search.

How Technical SEO is improving AEO and GEO

Answer Engine Optimization and Generative Engine Optimization lean heavily on technical SEO by leveraging structured data and proper schema implementation.

Search has changed a lot and fast. Earlier, people used search engines to click links and visit websites.

Remember that search engines are programs that crawl data on the internet and present it, in this case in featured snippets or AI snippets. The goal is to answer a search query fast and precisely with the information available in their indexes, and to provide helpful information.

Here is a better example. If you searched for “time in EST” or “time in Chicago” a few years back, you would get a link to a world-time site. More recently, the query is answered directly, for example as “12:12 am, Friday, 3 April 2026, Eastern Time (ET)”. This not only answers the query, it also provides the day and date in a precise, no-fluff answer.

So what is in it for search engines and users? User experience remains high since the query is answered correctly and quickly, which means the user will remember this and may come back to repeat similar searches. For search engines, it reduces the crawl budget spent on an informational term and saves ad payouts to third-party sites for time, calculators, and so on.

Now, users often get direct answers from:

AI tools (ChatGPT, Gemini, Perplexity)

Voice assistants

Featured snippets

This shift has created two new concepts:

AEO (Answer Engine Optimization)

GEO (Generative Engine Optimization)

Both depend heavily on Technical SEO, more than most people realize.

Understanding AEO and GEO (Simple Explanation)

What is AEO?

AEO (Answer Engine Optimization) means optimizing your content so it becomes the direct answer shown in search results. AEO focuses on answering the search query clearly, quickly, and directly, with extractable answers drawn from topics and subtopics. It targets:

Featured snippets

“People also ask”

Voice assistant answers

What is GEO?

GEO (Generative Engine Optimization) is about optimizing and structuring content so AI systems can use and cite your content when generating answers. It targets:

AI-generated summaries

Chatbot responses

Conversational answers

GEO focuses on being a trusted source that AI systems include inside AI-generated responses.

Bottom line: AEO relies on an extraction mechanism, while GEO relies on a generative engine.